We have been using artificial intelligence (AI) for many years, perhaps without realising it. AutoCorrect has been a feature of Microsoft Word since 1993. Many households have an Alexa or a similar smart home device. If you use Google Maps on your commute to work, it’s using AI to monitor traffic and suggest alternative routes.

Social media platforms including LinkedIn, Instagram and TikTok use AI to prioritise the posts most relevant to you. The more active you are on a particular platform, the more the AI learns about your preferences, and after a short time your feed becomes tailored to you. Conversely, the same AI also determines which posts receive few impressions and are not pushed out to a wider audience.

AI is a broad field of computer science that enables a computer to fulfil a command without being given exact, step-by-step instructions. Much of today’s AI relies on machine learning, in which algorithms learn patterns from large amounts of data so that the computer can “think” and respond in a way that simulates human intelligence.

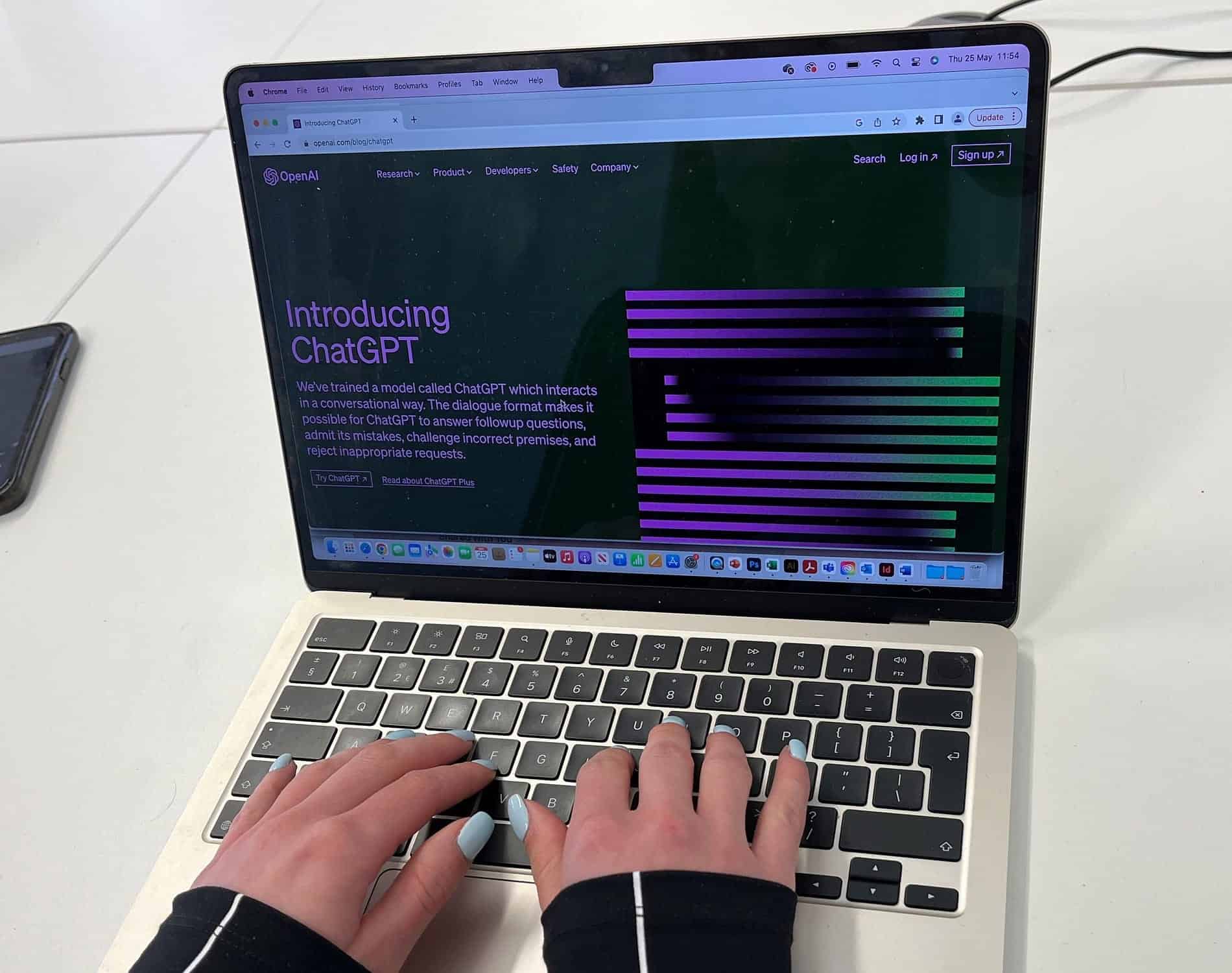

In recent months, AI has been brought to the forefront by the release of several advanced chatbots, including OpenAI’s ChatGPT. GPT, short for generative pre-trained transformer, is a type of large language model built on an artificial neural network, a structure loosely inspired by the neurones in the human brain. GPT can produce human-like language because it has been trained on vast amounts of text written by humans.

Generative AI chatbots respond to questions or commands in whichever format the user specifies, for example a blog article, an image, a poem or a recipe. Many large companies have used simpler chatbots on their websites for years. Perhaps the most familiar are customer support bots, which use AI to give an immediate answer to frequently asked questions (FAQs) and connect the user to a human for more complex queries.

Since the release of ChatGPT in November 2022, well-known tech providers, including Microsoft’s Bing search engine, Grammarly and Canva, have integrated generative AI into their software.

AI chatbots are still at an early stage of development, but it may not be long before it becomes difficult to tell the difference between what has been written by a human and what has been written by AI.

AI in the Life Sciences

An article by Attivio estimates that life science employees spend as much as 36% of their day searching through data for information. AI-powered search tools can make sense of vast amounts of data almost immediately.

In February 2023, Imperial College London launched the I-X Centre for AI in Science. In a statement, the college said: “We believe that some of the most critical discoveries in the future will be as a result of the application and development of AI techniques to Engineering, Natural and Mathematical Sciences.” Elsewhere in London, the UCL Centre for Artificial Intelligence has close research and training links with local AI communities, including the Gatsby Computational Neuroscience Unit.

AI is also being used to make the drug discovery process more efficient, for example by analysing how different molecules interact with one another.

How not to use AI

Whether using generative AI counts as plagiarism has sparked multiple debates and led to a new term, “AIgiarism”, or AI-assisted plagiarism. Following a backlash from academic institutions, OpenAI, the creator of ChatGPT, has said it is working on adding a watermark to the chatbot’s output to make AIgiarism easier to identify.

In addition, passing off AI-generated content as your own could harm your ranking on search engine results pages (SERPs). Google’s guidelines suggest that site owners should be transparent with readers when AI-generated content has been used on their website, as part of good SEO practice.

Maintaining your brand identity

Generative AI can write a three-page article on any topic within seconds, but the result is often generic and lacks a distinctive style and voice. Chatbots can’t imitate your brand’s voice because they don’t have years of experience working with your team, products, services and customers behind them. As a result, your brand identity can get lost or stop resonating with your target audience. You know the problems your customers face and how best to solve them; AI doesn’t.

AI can’t replace specialist knowledge

AI imitates human intelligence by drawing on information it has learned from the internet and other sources. It lacks the background, skills and experience that humans offer. AI is, therefore, only as good as the information available to it, which means the material it generates is prone to errors and misinformation and can lack depth.

Final Word

AI is a useful tool that can help to guide decisions, but it shouldn’t be used to make them. Most AI still needs human guidance to reach the right outcome. It’s a great tool for proofreading an article, quickly providing a broad overview of a complex topic, or helping to gather and disseminate data, but in most cases, AI can’t be used in place of a human… yet.